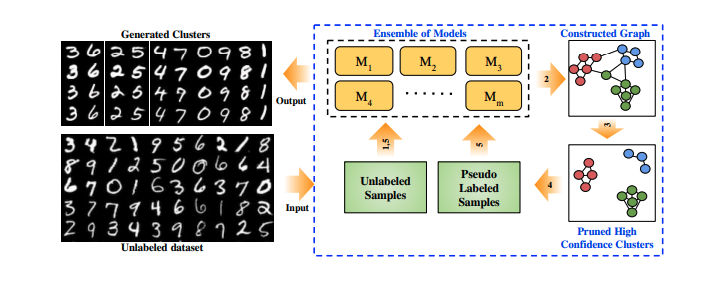

Many beginner machine learning projects involve structured, tabular data. Self-supervised learning has been shown to be very effective at learning useful representations, yet much of its success has been achieved on data types such as images, audio, and text. That success is mainly enabled by exploiting the spatial, temporal, or semantic structure of the data through augmentation. In contrastive learning in particular, data augmentation is important for generating different views of the same sample. However, data augmentation for tabular data has been difficult because of its unique structure and high complexity. This significantly limits tabular self-supervised learning and hinders progress in this domain. Even so, contrastive learning is the predominant method in the existing literature on self-supervised learning for tabular data. The motivation is that self-supervised learning should yield better results with less labeled training data. See also Self-Classifier: A Self-Supervised Classification Network.

While literature on generative self-supervised tabular and imaging models [3, 34] exists, it is limited in scope, using only two or four clinical features. To the best of our knowledge, there is no implementation of a contrastive self-supervised framework that incorporates both images and tabular data, which we aim to address with this work.

TabNet is a deep learning model for tabular learning. It uses sequential attention to choose a subset of meaningful features to process at each decision step. This instance-wise feature selection allows the model's learning capacity to be focused on the most salient features.

Learning with few labeled tabular samples is often an essential requirement for industrial machine learning applications, as many kinds of tabular data suffer from high annotation costs or make it difficult to collect new samples for novel tasks. Despite its importance, this problem is quite under-explored in tabular learning, and existing few-shot learning schemes from other domains are not straightforward to apply, mainly because of the heterogeneous characteristics of tabular data. In this paper, we propose a simple yet effective framework for few-shot semi-supervised tabular learning, coined Self-generated Tasks from UNlabeled Tables (STUNT). Our key idea is to self-generate diverse few-shot tasks by treating randomly chosen columns as a target label. We then employ a meta-learning scheme to learn generalizable knowledge from the constructed tasks. Moreover, we introduce an unsupervised validation scheme for hyperparameter search (and early stopping) by using STUNT to generate a pseudo-validation set from unlabeled data. Our experimental results demonstrate that this simple framework brings significant performance gains on various tabular few-shot learning benchmarks, compared to prior semi- and self-supervised baselines.
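To make the tabular augmentation problem concrete, here is a minimal sketch of one way to build two contrastive views of a row: replace a random subset of its features with values drawn from each column's empirical marginal (the same column of a randomly chosen row). This follows the random-feature-corruption idea popularized by SCARF; it is an assumption for illustration, not a method described above, and `corrupt_view`, `make_views`, and `corruption_rate` are hypothetical names.

```python
import random
from typing import List, Sequence, Tuple


def corrupt_view(row: Sequence[float], columns: List[List[float]],
                 corruption_rate: float, rng: random.Random) -> List[float]:
    """Return a copy of `row` with a random subset of features resampled.

    Each corrupted feature j is replaced by a value drawn from columns[j],
    i.e. from that feature's empirical marginal distribution.
    """
    n_features = len(row)
    n_corrupt = int(round(corruption_rate * n_features))
    view = list(row)
    for j in rng.sample(range(n_features), n_corrupt):
        view[j] = rng.choice(columns[j])
    return view


def make_views(table: List[List[float]], corruption_rate: float = 0.3,
               seed: int = 0) -> List[Tuple[List[float], List[float]]]:
    """Build two independently corrupted views of every row (a positive pair)."""
    rng = random.Random(seed)
    columns = [list(col) for col in zip(*table)]  # column-wise marginals
    return [
        (corrupt_view(row, columns, corruption_rate, rng),
         corrupt_view(row, columns, corruption_rate, rng))
        for row in table
    ]
```

Unlike image crops or color jitter, this corruption makes no assumption about spatial or semantic structure, which is why it is a common workaround for tables; the views it produces can still be unrealistic when columns are strongly correlated.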
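For the image-plus-tabular setting, a contrastive framework needs a loss that pulls together the embeddings of an image and the table row from the same subject while pushing apart mismatched pairs. A minimal sketch, assuming a CLIP-style symmetric InfoNCE loss over a batch of paired embeddings already produced by some image encoder and some tabular encoder (the encoders, `info_nce`, and the temperature value are all assumptions, not details given above):

```python
import math
from typing import List, Sequence


def _normalize(v: Sequence[float]) -> List[float]:
    # Assumes v is not the zero vector.
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def _cross_entropy(logits: List[float], target: int) -> float:
    # Numerically stable -log softmax(logits)[target].
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]


def info_nce(image_emb: List[List[float]], tab_emb: List[List[float]],
             temperature: float = 0.1) -> float:
    """Symmetric InfoNCE: row i of each list is a positive pair, all else negatives."""
    imgs = [_normalize(v) for v in image_emb]
    tabs = [_normalize(v) for v in tab_emb]
    n = len(imgs)
    # Cosine-similarity matrix scaled by the temperature.
    logits = [[sum(a * b for a, b in zip(imgs[i], tabs[j])) / temperature
               for j in range(n)] for i in range(n)]
    image_to_tab = sum(_cross_entropy(logits[i], i) for i in range(n)) / n
    tab_to_image = sum(_cross_entropy([logits[i][j] for i in range(n)], j)
                       for j in range(n)) / n
    return (image_to_tab + tab_to_image) / 2
```

When each image embedding is closest to its own row's tabular embedding, the loss approaches zero; swapping the pairs drives it up sharply.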
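TabNet's instance-wise feature selection can be sketched with sparsemax, which maps attention logits to a probability vector containing exact zeros, so each decision step attends to only a few features. This is a simplified illustration under stated assumptions: the per-step logits are supplied directly rather than produced by TabNet's learned attentive transformer, and a prior scale multiplied by `(gamma - mask)` after each step discourages reusing the same features. `feature_masks` and the value `gamma=1.3` are illustrative choices.

```python
from typing import List, Sequence


def sparsemax(z: Sequence[float]) -> List[float]:
    """Euclidean projection of z onto the probability simplex.

    Unlike softmax, small entries are mapped to exactly 0.
    """
    z_sorted = sorted(z, reverse=True)
    cumsum, k_z, cumsum_at_k = 0.0, 0, 0.0
    for k, zk in enumerate(z_sorted, start=1):
        cumsum += zk
        # The support size is the largest k with 1 + k * z_(k) > sum of top-k.
        if 1 + k * zk > cumsum:
            k_z, cumsum_at_k = k, cumsum
    tau = (cumsum_at_k - 1) / k_z  # threshold subtracted from every entry
    return [max(zi - tau, 0.0) for zi in z]


def feature_masks(step_logits: List[List[float]], gamma: float = 1.3) -> List[List[float]]:
    """Compute one sparse feature mask per decision step.

    The prior starts at 1 for every feature and is multiplied by
    (gamma - mask) after each step, so heavily used features are
    down-weighted in later steps.
    """
    prior = [1.0] * len(step_logits[0])
    masks = []
    for logits in step_logits:
        mask = sparsemax([p * a for p, a in zip(prior, logits)])
        masks.append(mask)
        prior = [p * (gamma - m) for p, m in zip(prior, mask)]
    return masks
```

With identical logits `[2.0, 1.0, 0.1, -1.0]` at two steps, the first mask puts all of its weight on feature 0, and the prior then shifts the second mask mostly toward feature 1. The masked input at each step would be the element-wise product of the mask and the feature vector.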
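STUNT's task construction can be sketched as follows. The text says only that randomly chosen columns are treated as a target label; here, as a simplifying assumption, a single random column is discretized into `n_way` equal-count bins to form pseudo-labels (the original method may discretize differently, for example by clustering), that column is removed from the inputs, and an N-way K-shot support/query split is sampled. `quantile_labels` and `make_task` are hypothetical names.

```python
import random
from typing import List, Optional, Sequence, Tuple

Example = Tuple[List[float], int]


def quantile_labels(values: Sequence[float], n_way: int) -> List[int]:
    """Map each value to one of n_way ordinal bins with (near-)equal counts."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    labels = [0] * len(values)
    for rank, i in enumerate(order):
        labels[i] = rank * n_way // len(values)
    return labels


def make_task(table: List[List[float]], n_way: int = 2, k_shot: int = 1,
              n_query: int = 1, rng: Optional[random.Random] = None
              ) -> Tuple[int, List[Example], List[Example]]:
    """Build one N-way K-shot task from an unlabeled table (a list of rows).

    Requires every bin to contain at least k_shot + n_query rows.
    """
    rng = rng or random.Random()
    # 1. Pick a random column to act as the pseudo-target.
    target_col = rng.randrange(len(table[0]))
    labels = quantile_labels([row[target_col] for row in table], n_way)
    # 2. Drop that column from the inputs so the label cannot be read off directly.
    inputs = [list(row[:target_col]) + list(row[target_col + 1:]) for row in table]
    # 3. Sample k_shot support rows and n_query query rows per pseudo-class.
    support: List[Example] = []
    query: List[Example] = []
    for c in range(n_way):
        members = [i for i, y in enumerate(labels) if y == c]
        chosen = rng.sample(members, k_shot + n_query)
        support += [(inputs[i], c) for i in chosen[:k_shot]]
        query += [(inputs[i], c) for i in chosen[k_shot:]]
    return target_col, support, query
```

Calling `make_task` repeatedly with different random columns yields the diverse pool of tasks that a meta-learner would train on.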
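Once tasks can be generated from unlabeled rows, the same machinery can score a model without labels. A minimal sketch, assuming a prototypical-network-style few-shot classifier (class prototypes are mean support embeddings, and each query takes the label of the nearest prototype by squared Euclidean distance): averaging query accuracy over tasks built from held-out unlabeled rows gives a pseudo-validation score that could drive hyperparameter search or early stopping. The meta-training step itself is omitted, and `embed`, `episode_accuracy`, and `pseudo_validation_score` are hypothetical.

```python
from typing import Callable, Dict, Hashable, List, Sequence, Tuple

Vector = List[float]
Example = Tuple[Sequence[float], Hashable]
Task = Tuple[List[Example], List[Example]]  # (support, query)
Embed = Callable[[Sequence[float]], Vector]


def prototypes(support: List[Example], embed: Embed) -> Dict[Hashable, Vector]:
    """Mean embedding of the support examples of each class."""
    sums: Dict[Hashable, Vector] = {}
    counts: Dict[Hashable, int] = {}
    for x, y in support:
        z = embed(x)
        if y not in sums:
            sums[y], counts[y] = [0.0] * len(z), 0
        sums[y] = [s + v for s, v in zip(sums[y], z)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}


def predict(x: Sequence[float], protos: Dict[Hashable, Vector], embed: Embed) -> Hashable:
    """Label of the nearest prototype (squared Euclidean distance)."""
    z = embed(x)
    return min(protos, key=lambda y: sum((a - b) ** 2 for a, b in zip(z, protos[y])))


def episode_accuracy(task: Task, embed: Embed) -> float:
    support, query = task
    protos = prototypes(support, embed)
    correct = sum(predict(x, protos, embed) == y for x, y in query)
    return correct / len(query)


def pseudo_validation_score(tasks: List[Task], embed: Embed) -> float:
    """Average few-shot accuracy over self-generated tasks (no true labels used)."""
    return sum(episode_accuracy(t, embed) for t in tasks) / len(tasks)
```

For example, one could compute `pseudo_validation_score` for each candidate checkpoint or hyperparameter setting and keep the highest-scoring one.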